Optimising Semiconductor Chip Placement with Machine Learning

Project Reference :

AISG-100E-2018-010

Institution :

A*STAR (Bioinformatics Institute)

Principal Investigator :

Dr Lee Hwee Kuan

Technology Readiness :

5 (Technology validated in relevant environment)

Technology Categories :

AI - Deep Learning

Background/Problem Statement

The FPGA market was worth USD5.3 billion in 2016 and is expected to grow to USD9.5 billion by 2023, a CAGR of 8.5%. The ASIC market was worth USD18.7 billion in 2017 and is forecast to reach USD35.2 billion by 2024, a CAGR of 9.5%. Both FPGA and ASIC chips are widely used in consumer electronics as well as enterprise systems. Each of these chips consists of millions of transistors, with the exact number depending on chip size and complexity.

Chip placement is the process of arranging the components on a chip before manufacturing. A chip placement consists of rectangular grids, with each grid containing a section of a module, a single module, or several different modules. Due to the complexity of modern chips, optimising the arrangement of modules requires highly experienced integrated circuit engineers, which makes it both expensive and one of the main pain points in chip design. Poor placement leads to products that fail to meet performance specifications and to costly delays in the production schedule.

Solution

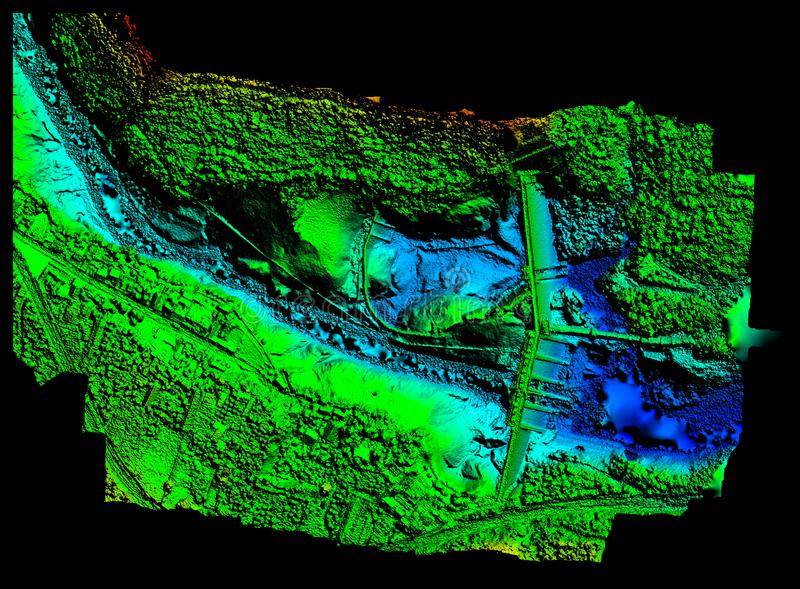

To solve this problem, an AI-based solution has been developed that treats chip placement and routing as a special kind of image. A neural network learns the relationship between chip placement, routing and chip performance, and this relationship is used to improve the quality of chip placement. Standard FPGA measures are used as the metrics for chip performance.
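The idea of viewing a placement as an image can be illustrated with a small sketch. It is an illustration only and not the project's actual encoding: the `Module` class, the `placement_to_image` and `utilisation` functions, and the choice of integer labels per grid cell are all assumptions made for this example. It rasterises rectangular modules onto a grid so that the result could, in principle, be fed to an image-based model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Module:
    """Hypothetical placed module: an axis-aligned rectangle on the grid."""
    module_id: int  # positive label; 0 is reserved for empty cells
    row: int        # top-left row of the rectangle
    col: int        # top-left column of the rectangle
    height: int     # number of rows covered
    width: int      # number of columns covered


def placement_to_image(modules: List[Module], rows: int, cols: int) -> List[List[int]]:
    """Rasterise a placement into a 2D grid of module labels (assumed encoding)."""
    image = [[0] * cols for _ in range(rows)]
    for m in modules:
        for r in range(m.row, m.row + m.height):
            for c in range(m.col, m.col + m.width):
                if not (0 <= r < rows and 0 <= c < cols):
                    raise ValueError(f"module {m.module_id} lies outside the grid")
                if image[r][c] != 0:
                    raise ValueError(f"module {m.module_id} overlaps another module")
                image[r][c] = m.module_id
    return image


def utilisation(image: List[List[int]]) -> float:
    """Fraction of grid cells occupied by any module."""
    total = sum(len(row) for row in image)
    used = sum(1 for row in image for value in row if value != 0)
    return used / total


if __name__ == "__main__":
    # Two made-up modules on a 3 x 5 grid.
    demo = [Module(1, 0, 0, 2, 2), Module(2, 0, 2, 1, 3)]
    for line in placement_to_image(demo, rows=3, cols=5):
        print(line)
```

In a real pipeline, further channels (for example routing usage per cell) would likely be stacked alongside the label grid, and the resulting array paired with a measured performance figure as the training target. Those details are not described in the source and are left out here.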

Benefits

- Speed up the deployment of 5G networks by applying FPGA chips to new communication protocols

- Decrease the prototyping cost of new chip designs

- Save time in the placement and routing stages of new chip designs

Potential Application(s)

Apply visual image recognition to the placement stage of IC design to reduce development time.

We welcome interest from industry in collaboration, co-development or customisation of the technology into a new product or service. If you have any enquiries or are keen to collaborate, please contact us.